Had the same thought, but I'm sure Layers 1-4 operate differently.

This is going to date me . . . but that is reminiscent of Token Ring topology. Haven't seen those in quite a while.

HW4 & HW5 Discussion

cab

Active Member

Interesting that the example shows "radars" (plural) - could just be a random example of course, but...

New patent app/expansion with a loop network topology for redundancy. Example diagrams have radar, lots of radar (not that it means anything).

https://image-ppubs.uspto.gov/dirsearch-public/print/downloadPdf/20230042642

Gen4 Autopilot approved in Europe

Tesla approved to sell Model S and Model X in Europe with Hardware 4 (HW4/AP4)

New inverter etc. coming at the same time:

Tesla is preparing to launch its new Autopilot hardware 4.0 upgrade

Bi-directional Inverters?

Maybe, but the Highlander didn't have those areas covered, unless more up front which is also a good viewpoint.

Could bumper cameras be on the side to aid Chuck Cook turns?

I always wondered if they need more cameras inside the cabin - for the self drive fleet.

The charger is the part that would need to be bidirectional. Drive units already regen.

For V2G?

V2X can be done with an external inverter now (supercharging in reverse).

For the car to output AC would require changes to the built-in charger.

The drive inverter changes in the quoted link are orthogonal to either option.

willow_hiller

Well-Known Member

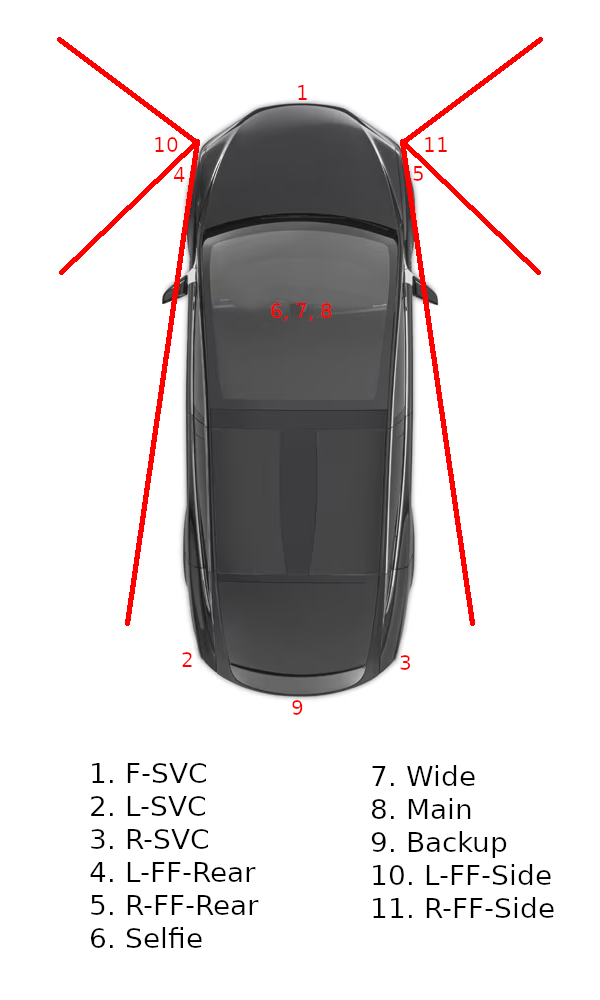

Trying to wrap my head around the camera positions based on the names. I looked up "SVC" in the Model X EPC, and it can refer to both front and rear bumpers. I put this diagram together so we could start piecing things together. Thoughts on these guesses?

It's a little weird to have 1 on the front fender and 2/3 on the rear bumper, but I couldn't come up with another configuration that made sense with "FF" possibly being "Front Fender."


willow_hiller

Well-Known Member

Green is skeptical it would be in the headlights due to glare. Also it would be hard to have rear facing cameras from that recess:

My guess is they'll look something like the current repeaters, but mounted at the far front corners of the front fender and containing one camera pointing sideways and one pointing backwards.

Elon said only high resolution radar was worthwhile. To achieve high resolution requires a large aperture vs frequency.

That's what the first guy at the Tesla lunch table said...

I'm only offering what I believe is possible. Sure the camera chip might be a bit bigger or something, but they are already operating at the camera hardware pixel level, I can only imagine what the cameras can do if they really tried.

So this topic is a prime candidate for an Engineering thread. If anyone wants to explore it more let me know. This is about conceptually using a strobed floodlight to see further/farther and possibly higher than oncoming cars, where the radar is a shared technology between the front light panel and the vision cameras - for CyberTruck. Again, just an idea. Maybe someone has a better explanation for adding radar back in (convincing us all they didn't need it) and it being gigantic in size much like a floodlight. Extra cameras too? Hmm... aliens coming soon...

The new radar is 76GHz or so, visible light is 400 to 800 THz. Unit active width is around 100mm so about 25x the 4 mm wavelength.
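For anyone who wants to check the arithmetic above, here is a rough sketch. The 76 GHz frequency and the roughly 100 mm active width come from the post; the beamwidth estimate uses the generic diffraction rule of thumb (angle ≈ wavelength / aperture), which is my assumption for illustration and not a published spec for this radar. The function names are made up for the example.

```python
import math

C_M_PER_S = 299_792_458.0  # speed of light in vacuum


def wavelength_mm(freq_hz: float) -> float:
    """Free-space wavelength in millimetres for a given frequency."""
    return C_M_PER_S / freq_hz * 1000.0


def aperture_in_wavelengths(aperture_mm: float, freq_hz: float) -> float:
    """How many wavelengths fit across the aperture."""
    return aperture_mm / wavelength_mm(freq_hz)


def rough_beamwidth_deg(aperture_mm: float, freq_hz: float) -> float:
    """Rule-of-thumb angular resolution, theta ~ lambda / D (assumed model)."""
    return math.degrees(wavelength_mm(freq_hz) / aperture_mm)


if __name__ == "__main__":
    freq = 76e9      # Hz, from the post ("76GHz or so")
    width = 100.0    # mm, from the post ("around 100mm")
    print(f"wavelength: {wavelength_mm(freq):.2f} mm")
    print(f"aperture:   {aperture_in_wavelengths(width, freq):.1f} wavelengths")
    print(f"beamwidth:  {rough_beamwidth_deg(width, freq):.2f} deg (rough)")
```

The wavelength comes out just under 4 mm and the aperture at about 25 wavelengths, which matches the figures quoted. Under the same rule of thumb, a wider unit or a higher frequency narrows the beam, which is the point about needing a large aperture for high resolution.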

Ya, I didn't know they had those specific details out there. I deleted my post in the main thread.


Thanks for the info.

willow_hiller

Well-Known Member

There's a good video in the replies to that tweet. When you see more angles, it looks more like a reflector for a LED light than a camera:

Agree, but it's the first part of that process (camera to vector space) that is the high effort portion *if* the feeds need to be recollected, relabeled, and retrained. Though, with all the improved infrastructure, it's less human effort than previously needed.

On the topic of whether or not HW4 and HW3 can integrate, here's my perspective. I've been coding for 40 years, C++ for 30+ years.

If Tesla have any idea how to do software engineering (and clearly they do), then there is likely a very definite modular break between the neural net code that does object recognition and image-based distance approximation (basically working out what is where), and the NN for deciding what actions to take, which lane to be in, what speed to set, where to face the vehicle etc...

In other words, some code creates a 'world view' likely saying 'object X,Y and Z are at these positions, with this percentage of confidence', and then the decision making code decides how to handle the vehicle given this data.

The second set (decision and driving) can be totally independent of how the first set of data is determined. In fact we KNOW this is how Tesla do it, because they used to be pure NN for object recognition and pure C++ for decisions. Now it's a bit of NN in the decision code.

I assume HW3 will be able to say 'objects X,Y,Z at these positions, 90% confidence'. HW4 will say 'X,Y,Z with 98% confidence'. As far as the decision code is concerned, it doesn't even have to know if it has HW3 or 4 installed. They MUST work this way, because the number of cameras may decrease in real time due to hardware failure, or blinding/obstruction of a camera by sunlight or dirt/dust.
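To make the split described above concrete, here is a toy sketch of a perception stage that emits a 'world view' with confidences and a decision stage that only ever sees that world view. Everything in it (`TrackedObject`, `fuse_cameras`, `plan_speed`, the camera names, the thresholds) is hypothetical and invented for illustration; it is not Tesla's code or architecture, just one way such a boundary could look.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class TrackedObject:
    label: str         # hypothetical object id, e.g. "car_1"
    x_m: float         # metres ahead of the vehicle
    y_m: float         # metres left (+) or right (-) of centre
    confidence: float  # 0.0 to 1.0


WorldView = List[TrackedObject]


def fuse_cameras(per_camera: Dict[str, WorldView]) -> WorldView:
    """Merge detections from whichever cameras are currently reporting.

    Toy rule: for each label keep the highest-confidence detection.
    A missing camera is simply a missing key.
    """
    best: Dict[str, TrackedObject] = {}
    for detections in per_camera.values():
        for obj in detections:
            current = best.get(obj.label)
            if current is None or obj.confidence > current.confidence:
                best[obj.label] = obj
    return list(best.values())


def plan_speed(world: WorldView, cruise_mps: float = 25.0,
               min_confidence: float = 0.5) -> float:
    """Decision code: sees only the world view, never the hardware version."""
    target = cruise_mps
    for obj in world:
        in_lane = abs(obj.y_m) < 1.5
        if obj.confidence >= min_confidence and in_lane and 0.0 < obj.x_m < 50.0:
            # Scale speed down linearly with distance to the object ahead.
            target = min(target, cruise_mps * obj.x_m / 50.0)
    return target


if __name__ == "__main__":
    older_suite = {"front": [TrackedObject("car_1", 20.0, 0.2, 0.90)]}
    newer_suite = {"front": [TrackedObject("car_1", 20.0, 0.2, 0.98)]}
    print(plan_speed(fuse_cameras(older_suite)))
    print(plan_speed(fuse_cameras(newer_suite)))
```

Both sensor suites drive the same `plan_speed` function; only the confidence values differ. Dropping a camera from the dictionary changes what the planner sees but not how it is called, which is the property the post argues the real system must have.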

So I don't think there is much concern that HW3 will not be able to use a lot of HW4 code. I don't see this as an issue at all. What IS an issue is whether or not the confidence level from HW3 sensors is sufficient to enable hands-free FSD. That's the only worry, from an investor POV.

In general I think it's worth thinking about HW4 and HW3 more as 'sensor suite 3' and 4, as that's likely the biggest real difference.

IANAL.
