Every so often a family friend sends me a link about Tesla crashes on Autopilot and how dangerous my new car can be. I had always assumed these must involve people doing really crazy things, until I took a road trip from San Diego to Dallas that took me through some curvy mountain roads. There I repeatedly noticed that the map and the displayed driving direction deviate in such a way that the driver can easily be confused and drive OFF the road, into a median barrier or, worse, into oncoming traffic. This kind of mismatch is particularly confusing and dangerous when you are tired and it's dark. Imagine being tired and suddenly noticing a map that makes it look like you are driving off the road and need to course correct when you don't.

Has anyone else noticed this issue?

I never see this problem with Waze, which also uses the Google Maps database, or even with Google Maps driving instructions. It seems to be an issue ONLY with Tesla's rendition of Google mapping.

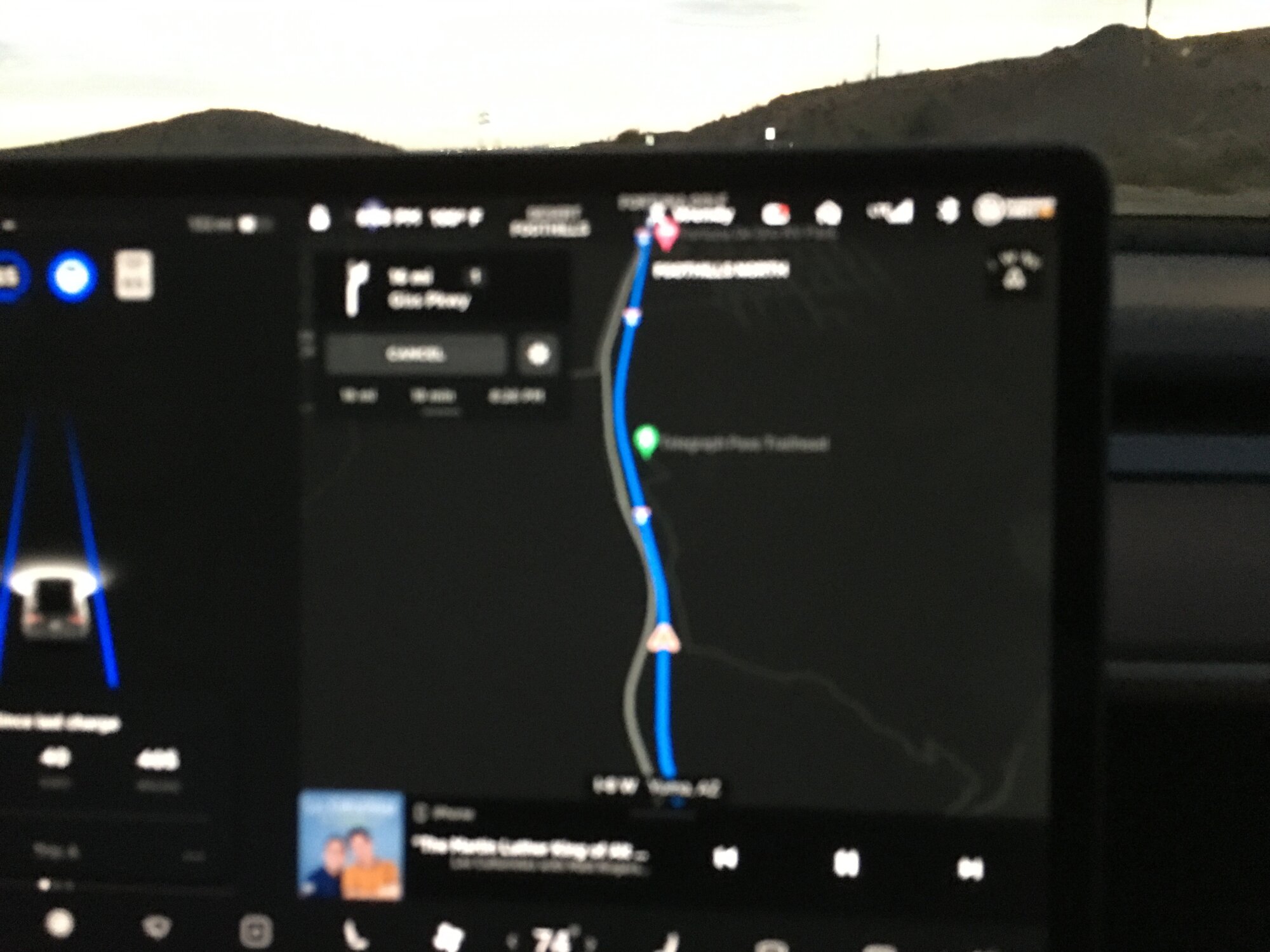

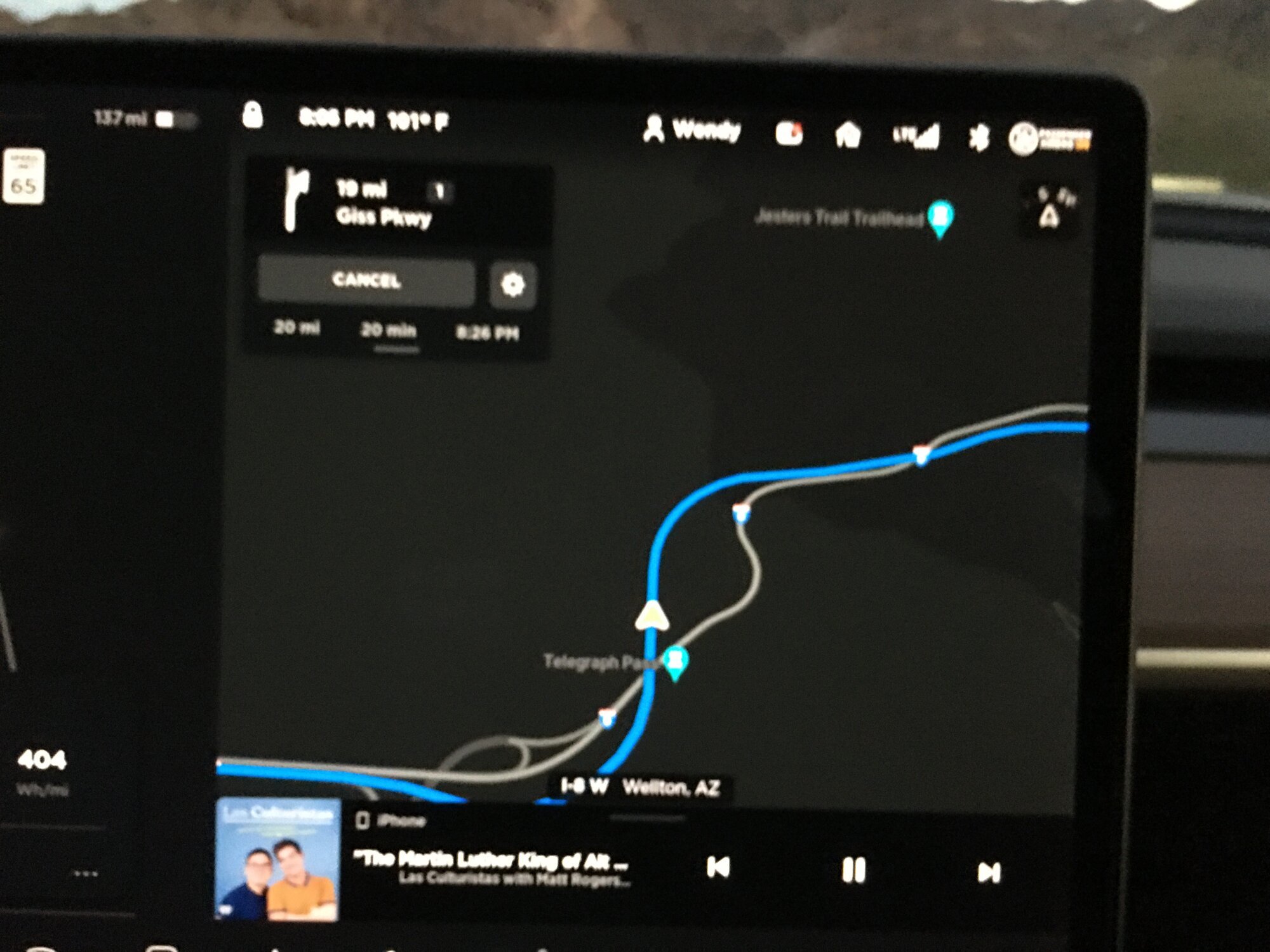

Admittedly these are NOT the greatest pictures, but it was dark and the car was moving. The blue line is the Tesla Nav's actual movement trajectory. The white lines are the roads as drawn from Google Maps. These usually coincide on typical city roads, but not on some very dangerous mountain roads where the road winds and curves a LOT. I can easily imagine someone getting confused and steering the car THE WRONG WAY even when it is on Autopilot. My guess is that a collision that occurs immediately after the car is pulled out of Autopilot may still register as an Autopilot-related collision.

Could this explain some of the Tesla accidents?
